While 3D Gaussian Splatting (3DGS) has enabled high-quality reconstruction of dynamic scenes, a persistent tension remains between Novel View Synthesis (NVS) fidelity and mesh geometric accuracy. We argue that this tension arises because deformation modeling, surface alignment, and appearance expressiveness compete for shared representational degrees of freedom. To mitigate this rendering–geometry trade-off, we propose Multi-Canonical Dynamic Gaussian Splatting, which combines three modules.

Multi-Canonical Deformation Modeling (MCDM) partitions the sequence into localized temporal segments to reduce motion over-smoothing. Surface-Aligned Anisotropic Densification (SAAD) initializes 2D Gaussians with scales and rotations derived from local face geometry for more faithful surface support. Residual-Based Expressiveness Restoration (RBER) restores fine appearance details through sequential 3D and 2D residual refinement on top of a geometry-stabilized base. Experiments on DG-Mesh, D-NeRF, and Nerfies show that our method improves the rendering–geometry balance, yielding consistent gains in rendering quality while preserving or improving mesh accuracy.

Each video shows our reconstructed dynamic scene (Gaussian rendering + mesh) across time. Scenes: Girlwalk, Hook, Jumping Jacks, Stand Up.

Side-by-side comparisons of our method with DG-Mesh. Scenes: Horse, Beagle, Bird.

Interactive 3D views. Scenes: Bird, Duck, T-Rex.

Our pipeline integrates three core modules that progressively decouple the competing objectives in dynamic Gaussian reconstruction — temporal deformation span, geometry-support formation, and residual appearance modeling.

We compare against state-of-the-art dynamic reconstruction methods — DG-Mesh and Dynamic-2DGS — on the DG-Mesh and D-NeRF datasets. For each method, we show both the direct Gaussian Splatting (GS) rendering and the extracted mesh surface. DG-Mesh yields blurry renderings, and Dynamic-2DGS produces fragmented or incomplete surfaces (e.g., missing parts in Horse). Our method reconstructs sharp appearances with topologically clean meshes, achieving superior performance in both rendering fidelity and geometric accuracy.

We compare the baseline (single canonical space) against MCDM (multiple canonical spaces) across an extended temporal sequence (T = 0, …, 10). The baseline forces a single canonical representation to span the entire motion range, so the deformation network over-smooths time-specific structures, which leads to blurred renderings and incoherent geometry. MCDM redistributes the temporal modeling burden across K canonical sets. Because each canonical set covers only a localized temporal segment, per-frame fidelity improves, yielding sharper appearances and more coherent mesh reconstruction.
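To make the idea of K canonical sets over localized segments concrete, the following is a minimal sketch of how frames might be routed to canonical sets. It assumes contiguous, non-overlapping segments of near-equal length and a per-segment normalized time; neither assumption is stated in the text above, and the function names `segment_boundaries` and `assign_canonical` are hypothetical.

```python
from bisect import bisect_right


def segment_boundaries(num_frames: int, num_segments: int) -> list[int]:
    """Return the start frame of each segment, with num_frames appended as a sentinel.

    Assumption: segments are contiguous, non-overlapping, and as equal in
    length as integer division allows.
    """
    if not 1 <= num_segments <= num_frames:
        raise ValueError("need 1 <= num_segments <= num_frames")
    return [(k * num_frames) // num_segments for k in range(num_segments + 1)]


def assign_canonical(frame: int, boundaries: list[int]) -> tuple[int, float]:
    """Map a frame index to (canonical set index, time normalized to [0, 1] in its segment)."""
    if not 0 <= frame < boundaries[-1]:
        raise ValueError("frame out of range")
    # Index of the last boundary that is <= frame.
    k = bisect_right(boundaries, frame) - 1
    start, end = boundaries[k], boundaries[k + 1]
    # Guard against single-frame segments to avoid division by zero.
    local_t = (frame - start) / max(end - start - 1, 1)
    return k, local_t


if __name__ == "__main__":
    bounds = segment_boundaries(num_frames=11, num_segments=3)  # frames T = 0..10
    for t in range(11):
        print(t, assign_canonical(t, bounds))
```

With 11 frames and K = 3, the boundaries are `[0, 3, 7, 11]`, so each hypothetical deformation network only has to model motion within three or four consecutive frames rather than the full sequence.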

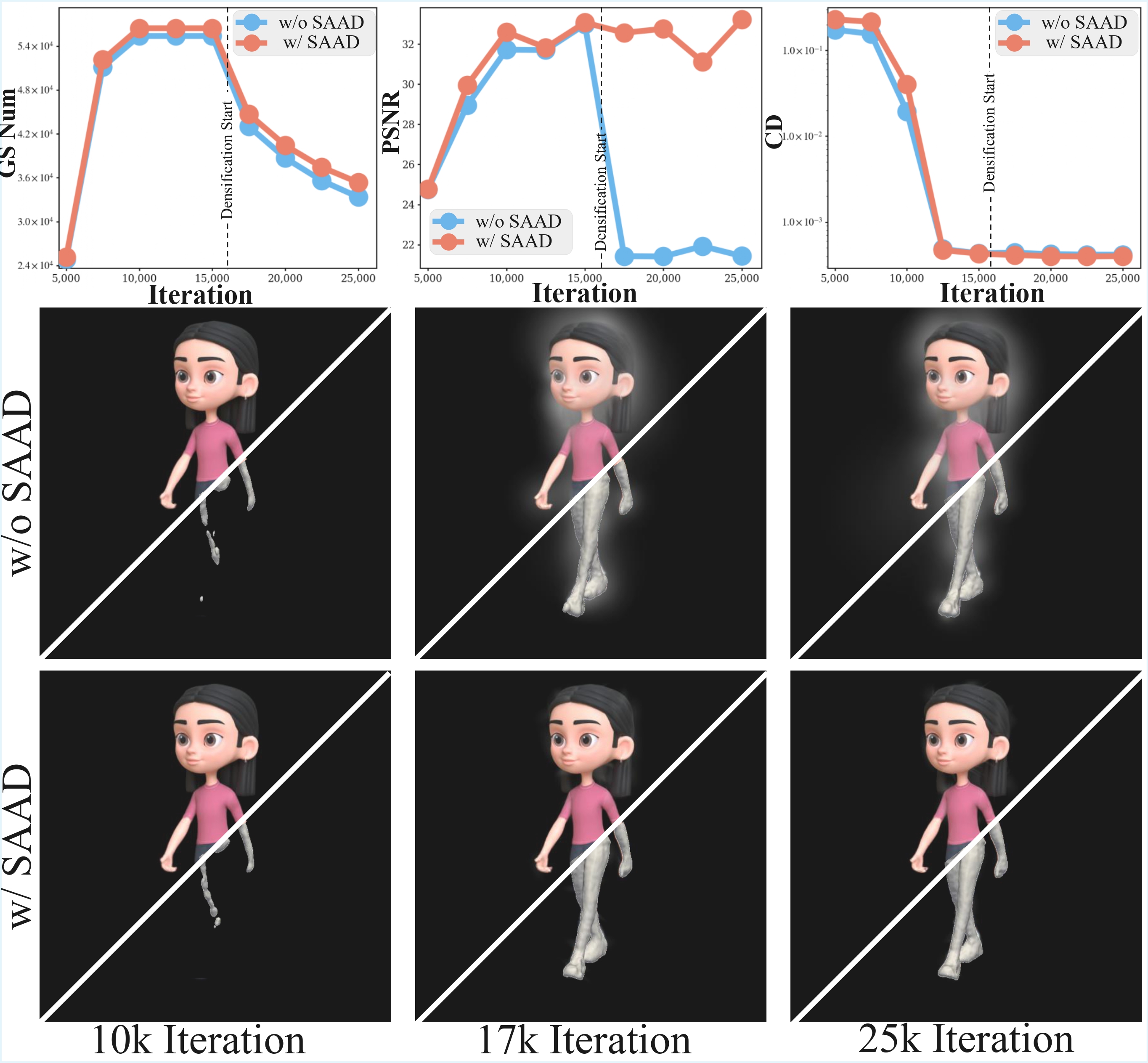

We compare isotropic densification (baseline) against our Surface-Aligned Anisotropic Densification (SAAD). Under mesh supervision, isotropic primitives inserted at face centroids ignore local surface anisotropy, progressively degrading rendering quality as they accumulate (visible blur at 10k → 25k iterations). SAAD derives the initial covariance of each new Gaussian from local face vertex statistics via eigendecomposition, producing surface-aligned primitives that preserve structural detail. The result is sharper boundaries and cleaner local structure throughout training.
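The covariance-from-face-statistics step can be illustrated with a short NumPy sketch. It assumes a single triangular face, an unweighted covariance of its three vertices, and scales set to the square roots of the two largest eigenvalues; these choices and the function name `surface_aligned_init` are illustrative assumptions, not the exact procedure used in our implementation.

```python
import numpy as np


def surface_aligned_init(face_vertices, eps: float = 1e-8):
    """Sketch: center, in-plane scales, and rotation for a 2D Gaussian from one triangle.

    Assumption: the covariance is the unweighted second moment of the three
    vertices about their centroid.
    """
    v = np.asarray(face_vertices, dtype=np.float64)
    if v.shape != (3, 3):
        raise ValueError("expected three 3D vertices with shape (3, 3)")
    center = v.mean(axis=0)
    d = v - center
    cov = d.T @ d / 3.0  # 3x3 covariance of the face vertices

    # eigh returns eigenvalues in ascending order for a symmetric matrix.
    eigvals, eigvecs = np.linalg.eigh(cov)
    normal = eigvecs[:, 0]    # near-zero variance: direction off the face plane
    t_minor = eigvecs[:, 1]   # shorter in-plane axis
    t_major = eigvecs[:, 2]   # longer in-plane axis

    # Columns are (major tangent, minor tangent, normal); flip the normal if
    # needed so the matrix is a proper rotation with determinant +1.
    rotation = np.stack([t_major, t_minor, normal], axis=1)
    if np.linalg.det(rotation) < 0:
        rotation[:, 2] *= -1.0

    # Standard deviations along the two in-plane axes, clamped for degenerate faces.
    scales = np.sqrt(np.maximum(eigvals[[2, 1]], eps))
    return center, scales, rotation


if __name__ == "__main__":
    tri = [[0.0, 0.0, 0.0], [4.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
    center, scales, rotation = surface_aligned_init(tri)
    print("center:", center)
    print("scales:", scales)
    print("rotation:\n", rotation)
```

For an elongated triangle such as the one in the usage example, the two scales differ, so the new primitive stretches along the face instead of being inserted as an isotropic blob at the centroid.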

@article{TODO,
  author  = {TODO},
  title   = {Multi-Canonical Dynamic Gaussian Splatting for Accurate Geometry and High-Fidelity Rendering},
  journal = {TODO},
  year    = {2025}
}